Model Evaluation And Benchmarking Tools Market Size 2026-2030

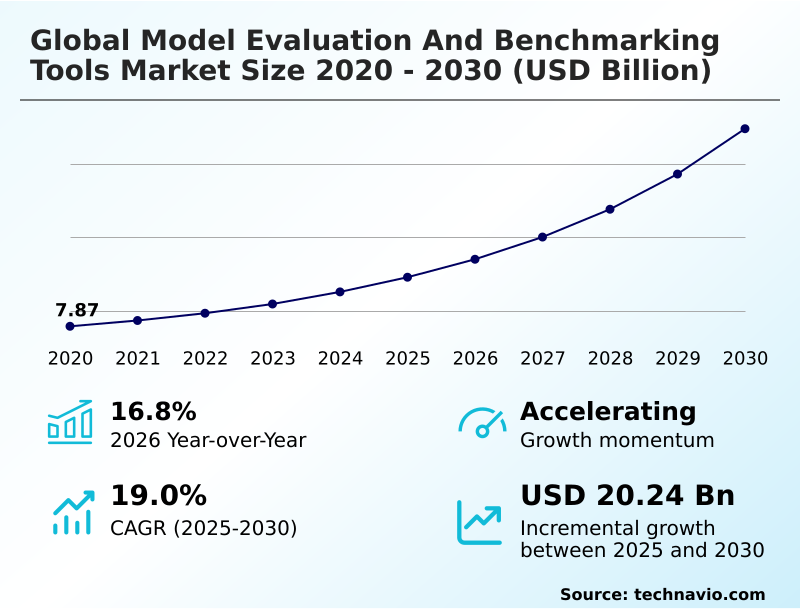

The model evaluation and benchmarking tools market is forecast to grow by USD 20.24 billion, at a CAGR of 19% from 2025 to 2030. The industrialization of standard compliance and mandatory safety audits will drive the model evaluation and benchmarking tools market.

Major Market Trends & Insights

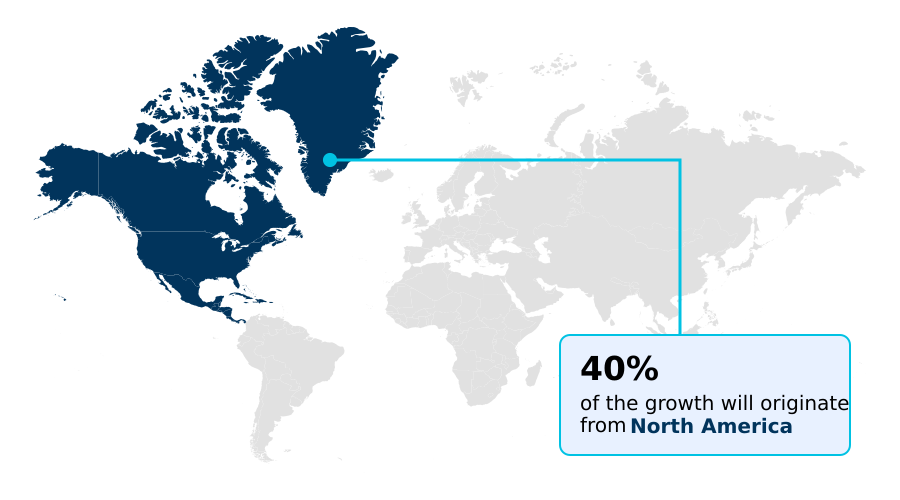

- North America dominated the market and is expected to account for 40.1% of the market's growth during the forecast period.

- By Component - The software or platforms segment was valued at USD 7.75 billion in 2024

- By Deployment - The on-premises segment accounted for the largest share of market revenue in 2024

Market Size & Forecast

- Market Opportunities: USD 26.93 billion

- Market Future Opportunities (incremental growth, 2025-2030): USD 20.24 billion

- CAGR from 2025 to 2030: 19%
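The headline figures combine an absolute increment (USD 20.24 billion) with a compound annual growth rate (19%). The sketch below shows how the two relate arithmetically. It assumes growth compounds at a constant 19% per year over five annual periods (2025 to 2030); the report does not state its compounding convention, and the helper names `cagr` and `implied_start_value` are hypothetical.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values `years` periods apart."""
    return (end_value / start_value) ** (1 / years) - 1


def implied_start_value(increment: float, rate: float, years: int) -> float:
    """Start value V0 such that V0 * (1 + rate) ** years - V0 == increment.

    Assumes growth compounds at the same `rate` in every period.
    """
    return increment / ((1 + rate) ** years - 1)


if __name__ == "__main__":
    # Illustrative only (hypothetical reading of the report): treat the
    # USD 20.24 billion increment and 19% CAGR as five annual compounding periods.
    increment_bn, rate, years = 20.24, 0.19, 5
    start_bn = implied_start_value(increment_bn, rate, years)
    end_bn = start_bn + increment_bn
    print(f"implied start: USD {start_bn:.2f} bn, implied end: USD {end_bn:.2f} bn")
    print(f"round-trip CAGR check: {cagr(start_bn, end_bn, years):.1%}")
```

Any start and end values printed here follow only from the stated assumption; they are not figures published in the report.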

Market Summary

- The model evaluation and benchmarking tools market is undergoing rapid industrialization as generative AI transitions from experimental labs to mission-critical enterprise functions. This growth is driven by the need for a multidimensional validation framework that moves beyond simple accuracy metrics.

- Current evaluation strategies encompass a spectrum of tests, including adversarial red teaming, bias detection, and reasoning integrity assessments, to ensure autonomous agents operate within strict safety and operational boundaries. The shift towards agentic AI, where models perform complex, multi-step tasks, has intensified the demand for standardized, reproducible benchmarking.

- A key trend is the rise of sovereign evaluation standards, as national governments mandate safety frameworks aligned with local policies. For instance, a financial services firm may now need tools that not only benchmark the performance of its algorithmic trading models but also provide an auditable trail of compliance with new AI acts, for example by demonstrating fairness in automated loan decisions.

- However, the market faces challenges from the inherent opacity of non-deterministic models and the technical debt created by inconsistent validation outcomes, which can erode trust and slow adoption.

What will be the Size of the Model Evaluation And Benchmarking Tools Market during the forecast period?


How is the Model Evaluation And Benchmarking Tools Market Segmented?

The model evaluation and benchmarking tools industry research report provides comprehensive data (region-wise segment analysis), with forecasts and estimates in "USD million" for the period 2026-2030, as well as historical data from 2020 to 2024, for the following segments.

- Component
  - Software or platforms
  - Services
- Deployment
  - On-premises
  - Cloud-based
  - Hybrid
- Industry application
  - BFSI
  - Healthcare and life sciences
  - IT and telecommunications
  - Retail and e-commerce
  - Others
- Geography
  - North America
    - US
    - Canada
    - Mexico
  - Europe
    - Germany
    - UK
    - France
  - APAC
    - China
    - Japan
    - India
  - South America
    - Brazil
    - Argentina
  - Middle East and Africa
    - UAE
    - Saudi Arabia
    - South Africa
  - Rest of World (ROW)

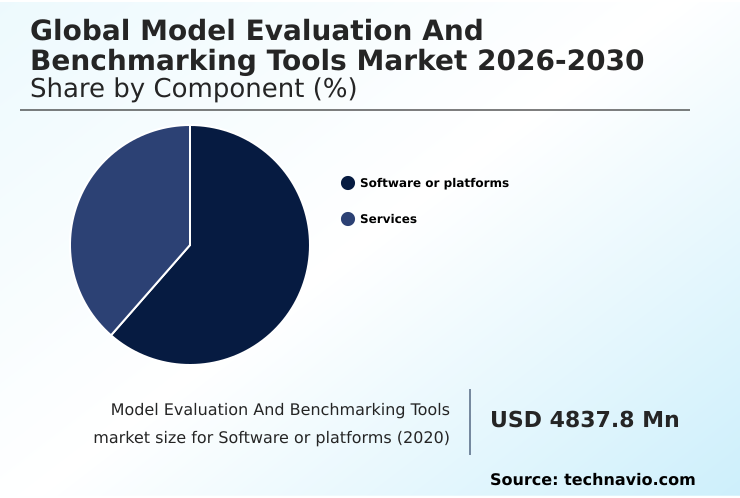

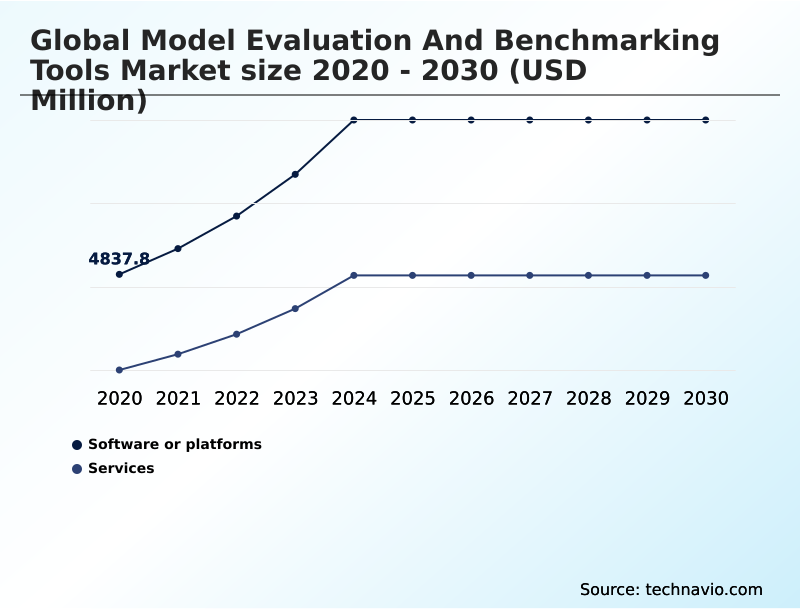

By Component Insights

The software or platforms segment is estimated to witness significant growth during the forecast period.

The software or platforms segment is the technological core of the market, providing the automated frameworks for measuring AI performance, safety, and reliability.

As enterprises shift to production-grade deployments, demand for robust software offering standardized, repeatable benchmarks across architectures has grown.

These platforms integrate automated bias detection and facilitate continuous model evaluation, covering metrics like accuracy and latency through algorithmic scoring and model-based grading.

The pivot to agentic AI necessitates advanced behavioral simulation platforms for agentic workflow evaluation and autonomous agent benchmarking.

In response to regulatory pressures, these platforms are incorporating automated conformity assessments and data drift detection to provide clear audit trails, ensuring AI assets meet technical and ethical standards.

Over 68% of enterprise workflows now integrate explainable AI modules to satisfy demands for transparency.

The software or platforms segment was valued at USD 7.75 billion in 2024 and is expected to increase gradually during the forecast period.

Regional Analysis

North America is estimated to contribute 40.1% of the growth of the global market during the forecast period. Technavio's analysts have explained in detail the regional trends and drivers that shape the market during the forecast period.


The geographic landscape is led by North America, which is projected to account for over 40% of the market's incremental growth, driven by a mature ecosystem of cloud providers and AI research labs in the United States and Canada.

This region focuses on specialized, task-oriented benchmarks for agentic workflow evaluation and catastrophic risk monitoring.

Europe is a highly structured market, distinguished by its commitment to data sovereignty and by regulations such as the EU AI Act, which imposes transparency, data-governance, and human-oversight requirements on high-risk systems and thereby drives demand for explainable AI modules and algorithmic fairness monitoring.

Meanwhile, the APAC region, particularly China, India, and Japan, is a critical growth engine fueled by industrial-scale AI integration.

This region shows accelerated adoption of cross-modal validation and human-in-the-loop evaluation to support massive deployments in manufacturing and consumer electronics, with over 60% of regional tech firms now using these methods.

Market Dynamics

Our researchers analyzed the data with 2025 as the base year, along with the key drivers, trends, and challenges. A holistic analysis of drivers will help companies refine their marketing strategies to gain a competitive advantage.

- The expanding scope of model evaluation for generative AI is pushing enterprises to adopt more sophisticated validation strategies. Benchmarking tools for autonomous agents are becoming essential as companies move beyond static tests to performance benchmarking of agentic workflows, which requires tools that assess how AI agents plan and carry out complex, multi-step reasoning.

- This shift is complicated by the need for robust hallucination detection in production AI and real-time model performance monitoring to manage the risks of non-deterministic systems. Fairness and bias auditing tools are now a standard requirement, particularly in regulated industries where explainable AI for financial models provides necessary transparency.

- The rise of sovereign AI evaluation and validation mandates means that compliance reporting for high-risk AI is no longer optional. In response, organizations are investing in automated red-teaming for LLMs and comprehensive security vulnerability testing for AI. This includes evaluating multi-modal AI systems and using synthetic data for model testing to cover edge cases.

- Furthermore, model drift detection and alerting through production AI observability solutions has become critical for maintaining performance. Platforms that offer cost-performance benchmarking for models are seeing higher adoption, as enterprises that implement continuous integration for ML models report significantly faster deployment cycles compared to those using manual validation.

- The ecosystem also includes AI safety and alignment benchmarks, as well as solutions for evaluating models deployed on edge devices.
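Model drift detection and alerting, mentioned above, is often implemented by comparing the distribution of a feature or model score in production against a reference window. The sketch below uses the population stability index (PSI), one common drift statistic among several. The equal-width binning, the 1e-6 floor for empty bins, and the 0.2 alert threshold are conventional rules of thumb assumed here, and the function names are hypothetical; none of them are taken from any vendor named in this report.

```python
import math
from typing import List, Sequence


def population_stability_index(
    reference: Sequence[float],
    production: Sequence[float],
    bins: int = 10,
    floor: float = 1e-6,
) -> float:
    """PSI = sum over bins of (p_i - r_i) * ln(p_i / r_i).

    r_i and p_i are the shares of the reference and production samples in bin i.
    Bins are equal-width over the reference sample's range (an assumption).
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # avoid division by zero for a constant reference

    def shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the reference range are clamped into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor empty bins so the logarithm stays finite.
        return [max(c / len(values), floor) for c in counts]

    r, p = shares(reference), shares(production)
    return sum((pi - ri) * math.log(pi / ri) for ri, pi in zip(r, p))


def drift_alert(psi: float, threshold: float = 0.2) -> bool:
    """Alert when PSI exceeds a rule-of-thumb threshold (hypothetical default)."""
    return psi > threshold


if __name__ == "__main__":
    reference_scores = [i / 100 for i in range(100)]  # toy reference window
    shifted_scores = [0.8 + i / 500 for i in range(100)]  # toy production window, skewed high
    psi = population_stability_index(reference_scores, shifted_scores)
    print(f"PSI = {psi:.2f}, alert = {drift_alert(psi)}")
```

Production observability platforms typically track many such statistics per feature and per time window; this sketch checks a single numeric feature against a single reference window.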

What are the key market drivers leading to the rise in the adoption of Model Evaluation And Benchmarking Tools?

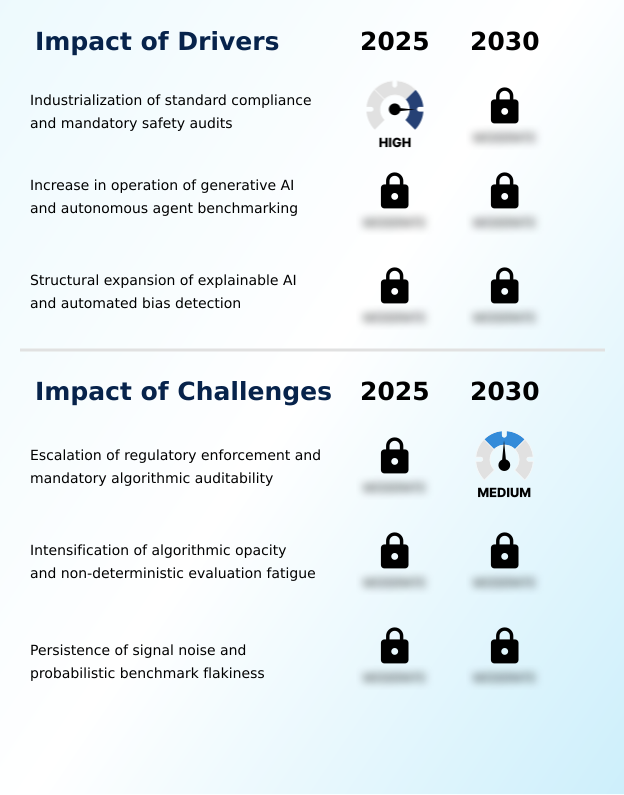

- The industrialization of standard compliance, driven by stringent regulatory frameworks and mandatory safety audits, is a key driver of market growth and is reshaping the industry.

- The enforcement of AI regulations has made continuous model evaluation a legal prerequisite in regulated digital environments, turning complex mandates into audit-ready workflows that rely on automated compliance reporting.

- The rapid transition toward generative AI and autonomous agent benchmarking is another major driver, as enterprises now require tools for reasoning trace analysis and performance measurement of agents in complex, multi-step tasks.

- The explainable AI sector, which has reached a valuation of over USD 11 billion, is also propelling the market, with explainable AI modules integrated into 68% of enterprise workflows.

- This demand for automated bias detection and counterfactual explanations is driven by the need to build users' trust in AI-driven decisions.

What are the market trends shaping the Model Evaluation And Benchmarking Tools Industry?

- A primary market trend is the institutionalization of agentic benchmarking and the deployment of multi-turn reasoning validation. This reflects a shift toward systemic evaluation of autonomous agents rather than the underlying base models.

- This shift requires frameworks for multi-turn reasoning validation and behavioral assessment, and it is leading to the deprecation of older benchmarks.

- A second key trend is the growth of sovereign evaluation standards and policy-driven safety frameworks, which require independent third-party auditing and auditable evidence of compliance with high-risk system requirements. This fosters a market for tools specializing in responsible AI governance.

- Finally, the expansion of multimodal and economic proving grounds reflects a move toward utilitarian evaluation. This trend uses cross-modal validation to test systems across diverse data types, addressing the benchmark saturation problem and focusing on measurable business productivity.

What challenges does the Model Evaluation And Benchmarking Tools Industry face during its growth?

- The escalation of regulatory enforcement and the increasing burden of mandatory algorithmic auditability present a key challenge to the market.

- Nearly 70% of large enterprises have adopted formal governance checklists to mitigate legal risks, placing significant financial pressure on providers to integrate sophisticated monitoring through model observability platforms.

- Another structural challenge is the inherent opacity of non-deterministic models, which leads to inconsistent validation outcomes and evaluation fatigue among technical teams. The recent "model avalanche", in which multiple frontier models were released in a single week, shows how the pace of innovation has outstripped the capacity of existing benchmarks to provide reliable metrics.

- Finally, the persistence of benchmark flakiness erodes trust, as failures from adversarial red teaming can stem from subtle prompt shifts, making it difficult to distinguish genuine regressions from statistical variance.
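One common way to separate a genuine regression from run-to-run noise is a significance test on benchmark pass rates. The sketch below applies a pooled two-proportion z-test to the pass counts of a baseline run and a candidate run. It assumes the two runs are independent samples; when the same prompts are re-run on both models, a paired test such as McNemar's test is usually more appropriate. The function names and the 0.05 significance level are hypothetical choices, not part of any tool named in this report.

```python
import math


def two_proportion_p_value(base_pass: int, base_n: int, cand_pass: int, cand_n: int) -> float:
    """Two-sided p-value of a pooled two-proportion z-test on pass rates."""
    pooled = (base_pass + cand_pass) / (base_n + cand_n)
    se = math.sqrt(pooled * (1 - pooled) * (1 / base_n + 1 / cand_n))
    if se == 0:
        return 1.0  # both runs all-pass or all-fail: no measurable difference
    z = (base_pass / base_n - cand_pass / cand_n) / se
    # For a standard normal Z, P(|Z| >= |z|) = erfc(|z| / sqrt(2)).
    return math.erfc(abs(z) / math.sqrt(2))


def is_genuine_regression(
    base_pass: int, base_n: int, cand_pass: int, cand_n: int, alpha: float = 0.05
) -> bool:
    """Flag a regression only if the candidate scores lower AND the drop is unlikely to be noise."""
    dropped = cand_pass / cand_n < base_pass / base_n
    return dropped and two_proportion_p_value(base_pass, base_n, cand_pass, cand_n) < alpha


if __name__ == "__main__":
    # Hypothetical runs: 1,000 red-teaming prompts each, counting safe responses.
    print(is_genuine_regression(820, 1000, 810, 1000))  # False: a 1-point dip is within noise
    print(is_genuine_regression(820, 1000, 760, 1000))  # True: a 6-point drop is significant
```

Requiring both a drop and a small p-value keeps a single unlucky run from being reported as a regression, at the cost of needing enough prompts for real drops to reach significance.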

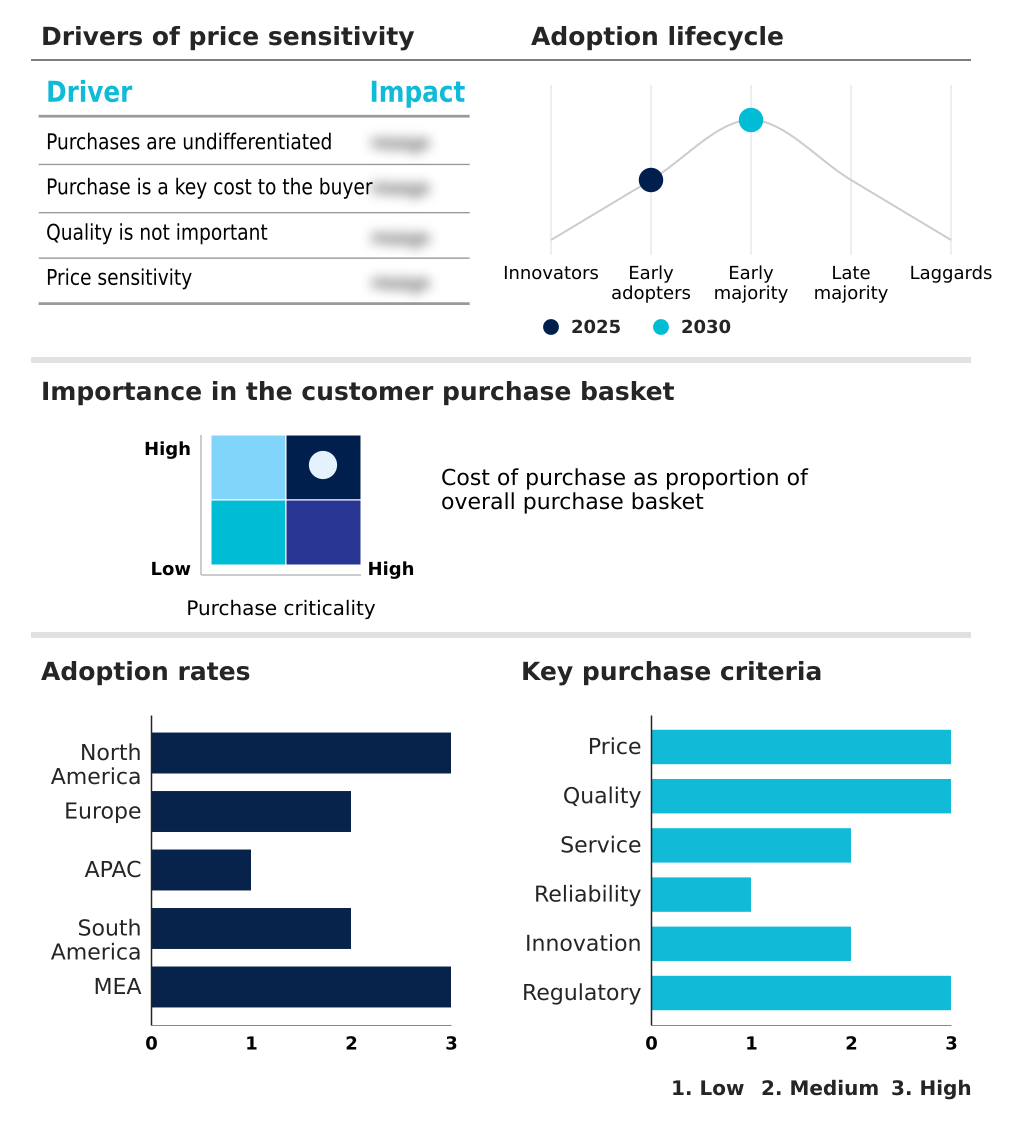

Exclusive Technavio Analysis on Customer Landscape

The model evaluation and benchmarking tools market forecasting report covers the market's adoption lifecycle, from the innovator stage to the laggard stage. It focuses on adoption rates in different regions based on penetration. The report also includes key purchase criteria and drivers of price sensitivity to help companies evaluate and develop their market growth strategies.

Customer Landscape of Model Evaluation And Benchmarking Tools Industry

Competitive Landscape

Companies are implementing various strategies, such as strategic alliances, partnerships, mergers and acquisitions, geographical expansion, and product/service launches, to enhance their presence in the industry.

Amazon Web Services Inc. - Offers integrated tools for performance benchmarking, explainability, and bias detection, facilitating reliable and compliant AI model deployment throughout the enterprise lifecycle.

The industry research and growth report includes detailed analyses of the competitive landscape of the market and information about key companies, including:

- Amazon Web Services Inc.

- Arize AI Inc.

- ArthurAI Inc.

- Credo AI

- Databricks Inc.

- DataRobot Inc.

- Evidently AI

- Fiddler AI

- Galileo

- Google LLC

- Hugging Face Inc.

- Labelbox

- LangChain Inc.

- Microsoft Corp.

- Neptune Labs Inc.

- OpenAI

- Scale AI

- Valohai Oy

Qualitative and quantitative analysis of companies has been conducted to help clients understand the wider business environment as well as the strengths and weaknesses of key industry players. Data is qualitatively analyzed to categorize companies as pure play, category-focused, industry-focused, and diversified; it is quantitatively analyzed to categorize companies as dominant, leading, strong, tentative, and weak.

Recent Developments and News in the Model Evaluation And Benchmarking Tools Market

- In October 2024, LangWatch launched its Agent Simulation Engine, enabling developers to simulate realistic user interactions and complex task flows to identify model regressions before production deployment.

- In November 2024, the Indian Ministry of Electronics and Information Technology inaugurated the AI Safety Institute, establishing it as the primary national body for validating foundational models under the India AI Governance framework.

- In January 2025, Arize AI Inc. released a major update to its Arize AX platform, introducing a centralized Evaluator Hub for creating and deploying reusable, version-controlled evaluators across experiments.

- In April 2025, MLCommons released the MLPerf Inference v6.0 benchmark suite, introducing the industry’s first open-weight large language model benchmark for mathematics and coding and new text-to-video generation benchmarks.

Technavio's research methodology blends expert interviews, extensive data synthesis, and validated models to produce its Model Evaluation And Benchmarking Tools Market insights.

| Market Scope | |
|---|---|
| Number of pages | 297 |
| Base year | 2025 |
| Historic period | 2020-2024 |
| Forecast period | 2026-2030 |
| Growth momentum & CAGR | Accelerate at a CAGR of 19% |
| Market growth 2026-2030 | USD 20,243.5 million |
| Market structure | Fragmented |
| YoY growth 2025-2026 (%) | 16.8% |
| Key countries | US, Canada, Mexico, Germany, UK, France, Italy, Spain, The Netherlands, China, Japan, India, South Korea, Australia, Indonesia, Brazil, Argentina, Chile, UAE, Saudi Arabia, South Africa, Israel and Turkey |
| Competitive landscape | Leading Companies, Market Positioning of Companies, Competitive Strategies, and Industry Risks |

Research Analyst Overview

- The model evaluation and benchmarking tools market is pivoting toward comprehensive validation frameworks to address the complexity of modern AI. The integration of explainable AI modules has become standard, with data showing 68% of enterprise workflows now include them to satisfy demands for transparency. This trend directly informs boardroom decisions on risk management and compliance strategy, particularly in regulated sectors.

- Core technologies now include agentic workflow evaluation and multi-turn reasoning validation to assess autonomous systems. Techniques like adversarial red teaming, automated bias detection, and hallucination detection are essential for managing operational risks. The use of model-as-a-judge frameworks and large-language-model-based evaluation provides scalable alternatives to manual human-in-the-loop evaluation.

- To ensure robustness, developers employ synthetic data assessment, regression testing, and performance drift detection as part of continuous model evaluation. The emergence of sovereign AI initiatives and the need for responsible AI governance are driving demand for evaluation-as-a-service platforms that support red-teaming protocols, bias detection modules, and cross-modal validation against economic benchmarks to ensure both safety and business value.

- This includes checks for prompt injection resilience and counterfactual explanations with clear feature-importance visualizations.

What are the Key Data Covered in this Model Evaluation And Benchmarking Tools Market Research and Growth Report?

What is the expected growth of the Model Evaluation And Benchmarking Tools Market between 2026 and 2030?

USD 20.24 billion, at a CAGR of 19%

What segmentation does the market report cover?

The report is segmented by Component (Software or platforms, and Services), Deployment (On-premises, Cloud-based, and Hybrid), Industry Application (BFSI, Healthcare and life sciences, IT and telecommunications, Retail and e-commerce, and Others), and Geography (North America, Europe, APAC, South America, and Middle East and Africa).

Which regions are analyzed in the report?

North America, Europe, APAC, South America, and Middle East and Africa

What are the key growth drivers and market challenges?

Key driver: industrialization of standard compliance and mandatory safety audits. Key challenge: escalation of regulatory enforcement and mandatory algorithmic auditability.

Who are the major players in the Model Evaluation And Benchmarking Tools Market?

Amazon Web Services Inc., Arize AI Inc., ArthurAI Inc., Credo AI, Databricks Inc., DataRobot Inc., Evidently AI, Fiddler AI, Galileo, Google LLC, Hugging Face Inc., Labelbox, LangChain Inc., Microsoft Corp., Neptune Labs Inc., OpenAI, Scale AI, and Valohai Oy

Market Research Insights

- The market is defined by a pivot towards rigorous, automated validation frameworks. The need for sovereign evaluation standards and policy-driven safety frameworks is compelling enterprises to adopt comprehensive model governance checklists; industry data shows nearly 70% of large firms now use these to mitigate non-compliance risks under new AI acts.

- This shift necessitates AI safety benchmarks and automated compliance reporting to provide immutable audit trails. As organizations implement managed evaluation-as-a-service solutions, they gain access to specialized evaluation framework design and independent third-party auditing. These services improve model integrity, with some platforms demonstrating a 30% reduction in model hallucination rates, directly enhancing reliability in production environments.
