AI Inference Optimization Software Market Size and Growth Forecast 2026-2030

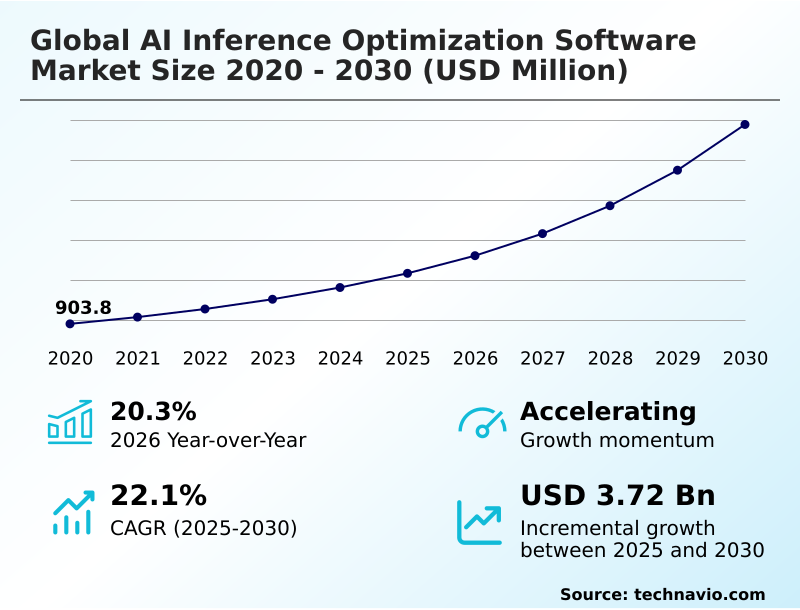

The AI Inference Optimization Software Market was valued at USD 2.17 billion in 2025 and is projected to grow at a CAGR of 22.1% during the forecast period 2026-2030.

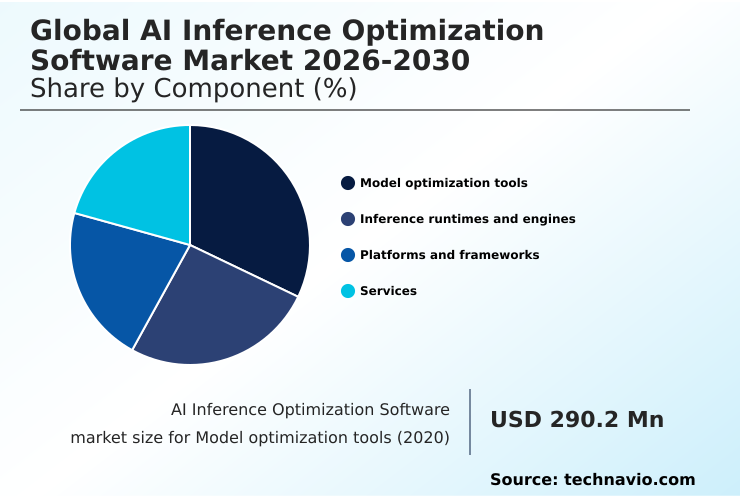

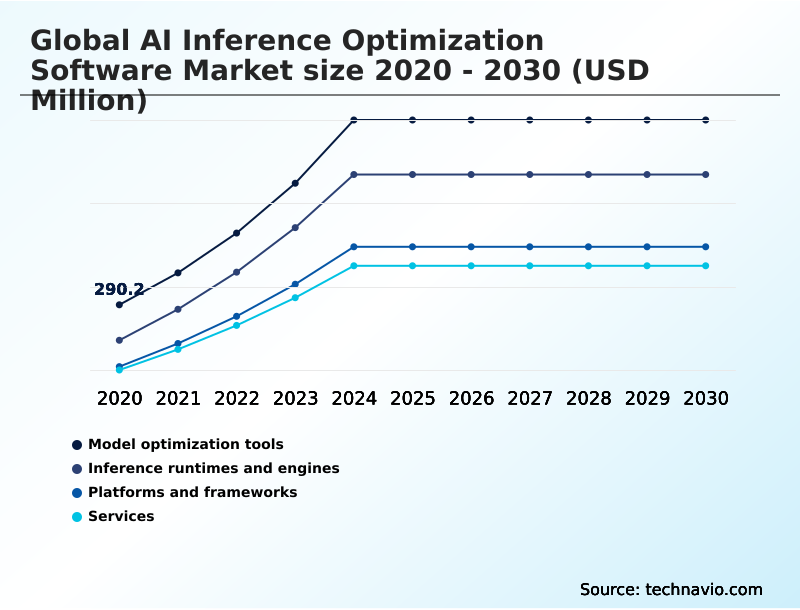

North America accounts for 39.6% of incremental growth during the forecast period. By component, the model optimization tools segment was valued at USD 582.4 million in 2024; by deployment, the cloud-based segment holds the largest revenue share.

The market is projected to grow by USD 4.98 billion from 2020 to 2030, with USD 3.72 billion of that growth expected between the 2025 base year and 2030, that is, over the 2026-2030 forecast period.
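As a rough consistency check, the headline figures can be compounded forward. The sketch below assumes the 22.1% CAGR applies as a constant annual rate to the USD 2.17 billion base for each of the five forecast years; the variable and function names are illustrative, and the implied 2030 market size is a derived value rather than a figure quoted in the report.

```python
# Figures quoted in the report (USD billions). Names are illustrative only.
BASE_2025 = 2.17   # market size in the 2025 base year
CAGR = 0.221       # compound annual growth rate over 2026-2030
YEARS = 5          # 2026, 2027, 2028, 2029, 2030


def project(base: float, rate: float, years: int) -> float:
    """Compound a base value forward by `years` at a constant annual rate."""
    return base * (1.0 + rate) ** years


# Assumption: growth is spread evenly, which is what a CAGR describes on average.
size_2030 = project(BASE_2025, CAGR, YEARS)
incremental = size_2030 - BASE_2025

print(f"Implied 2030 market size:   USD {size_2030:.2f} billion")
print(f"Implied incremental growth: USD {incremental:.2f} billion")
```

Under that assumption the implied increment comes out at roughly USD 3.72 billion, in line with the stated forecast-period growth. Actual year-by-year growth need not be constant; a CAGR only summarizes the average rate.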


AI Inference Optimization Software Market Overview

The AI inference optimization software market is defined by the need to bridge the gap between large, computationally intensive neural networks and the practical constraints of production environments. Growth is driven by the need for efficient AI in data centers and on resource-limited hardware, with North America accounting for nearly 40% of the incremental growth. Core to this is managing generative AI deployment costs and enabling AI inference on CPUs. For instance, a smart factory implementing real-time computer vision for quality control on a production line uses optimization software for AI workload orchestration, deploying pruned models on embedded AI systems to detect defects without cloud latency. This on-premise AI inference capability, supported by interoperability standards such as the Open Neural Network Exchange (ONNX), is crucial for improving inference server throughput and operational efficiency, directly impacting manufacturing yields and reducing waste.

Drivers, Trends, and Challenges in the AI Inference Optimization Software Market

Strategic decision-making in the global AI inference optimization software market is increasingly influenced by the dual pressures of performance requirements and regulatory compliance. The challenge of deploying large language models on edge devices calls for advanced software tools for optimizing generative AI, moving beyond brute-force hardware scaling.

For instance, an automotive OEM in the EU, navigating the stringent requirements of the EU AI Act, might rely on quantization-aware training to preserve accuracy in its advanced driver-assistance systems and ensure safety-critical reliability.

This involves a detailed cost-benefit analysis of on-premise versus cloud AI inference, where on-device processing can reduce latency by more than 50% compared to a round trip to a distant cloud server, a critical factor for real-time decision-making.

Performance comparison of AI inference runtimes becomes a key part of vendor qualification, with a focus on cross-platform deployment using ONNX Runtime to mitigate the impact of hardware heterogeneity on AI performance and avoid vendor lock-in. These best practices for AI model optimization are fundamental to reducing TCO for large-scale AI deployment and ensuring long-term viability.

Primary Growth Driver: The escalating demand for real-time data processing at the network edge, driven by latency-sensitive applications in autonomous systems and IoT, serves as a primary catalyst for the market.

The market's momentum is fueled by the escalating demand for real-time AI processing at the network edge and the explosive growth of generative AI.

Enterprises are driven to adopt optimization software to manage generative AI deployment costs, which can be prohibitive at scale. This has intensified the focus on techniques that enable low-latency LLM serving and efficient on-device machine learning.

In practice, deploying AI for industrial IoT applications, such as predictive maintenance sensors in a factory, requires models optimized for minimal power consumption and memory footprint.

This need is amplified by corporate sustainability goals, pushing organizations to adopt energy-efficient neural networks to reduce their overall carbon footprint, a consideration now integral to IT procurement and strategy.

Emerging Market Trend: The proliferation of hardware-agnostic compilation and interoperability standards is reshaping model deployment strategies, enabling performance optimization across diverse silicon architectures without vendor lock-in.

A defining trend is the move toward hardware-agnostic compilation, which decouples model development from specific silicon, a significant factor in a market exhibiting year-over-year growth of 20.3%. This allows for seamless cross-platform ML deployment using a unified hardware abstraction layer for AI.

Enterprises are leveraging advanced tools like the Multi-Level Intermediate Representation (MLIR) as common compiler infrastructure for deep learning, enabling a single optimized model to be deployed across diverse hardware environments. This approach is critical for firms adopting multi-cloud strategies, as it ensures consistent performance and simplifies the management of AI model serving frameworks.

The focus has shifted from hardware-specific tuning to creating portable, high-performance artifacts that can run anywhere from data centers to the edge.

Key Industry Challenge: The persistence of hardware heterogeneity and interoperability barriers creates significant engineering challenges, hindering the development of universally efficient and portable AI solutions across the industry.

A primary market challenge stems from the persistence of hardware heterogeneity, which complicates deployment and increases total cost of ownership. The lack of a universal hardware abstraction layer for AI means that optimization software must support a fragmented ecosystem of processors, from CPUs and GPUs to specialized AI accelerators.

This issue is particularly acute when optimizing sparse neural networks, which have different computational patterns than dense models. As a result, engineering teams must use a variety of software tools for optimizing generative AI, often creating bespoke solutions for each deployment target.

This fragmentation, underscored by initiatives like the Unified Acceleration Foundation seeking to improve portability, remains a significant barrier to achieving a truly unified and efficient AI development lifecycle.


AI Inference Optimization Software Market Segmentation

The AI inference optimization software industry research report provides comprehensive data, including region-wise segment analysis, with forecasts and analysis for the period 2026-2030, as well as historical data from 2020-2024 for the following segments.

Component Segment Analysis

The model optimization tools segment is estimated to witness significant growth during the forecast period.

Model optimization tools represent a foundational market segment, addressing the critical need to adapt complex neural networks for operational deployment.

Procurement decisions in this area focus on techniques like model quantization and neural network pruning to reduce computational and memory footprints.

These tools are essential for managing the inherent latency versus accuracy tradeoff, enabling AI model deployment on diverse hardware, from data centers to edge devices.
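To make the tradeoff concrete, the following is a minimal sketch of symmetric per-tensor quantization in plain Python. It is not any vendor's implementation: the weight values are hypothetical, the function names are illustrative, and production tools typically add calibration, per-channel scales, and hardware-specific kernels.

```python
from typing import List, Tuple


def quantize_symmetric(weights: List[float], bits: int = 8) -> Tuple[List[int], float]:
    """Map floats to signed integers using one shared scale (symmetric, per-tensor)."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8-bit, 7 for 4-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer level and clamp to the representable range.
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    """Recover approximate float values from the integer codes."""
    return [v * scale for v in q]


def max_abs_error(a: List[float], b: List[float]) -> float:
    """Largest element-wise absolute difference between two vectors."""
    return max(abs(x - y) for x, y in zip(a, b))


# Hypothetical weights, chosen only for illustration.
weights = [0.82, -0.41, 0.05, -1.27, 0.33, 0.0]

for bits in (8, 4):
    q, scale = quantize_symmetric(weights, bits)
    error = max_abs_error(weights, dequantize(q, scale))
    print(f"{bits}-bit codes: {q}  max reconstruction error: {error:.4f}")
```

Fewer bits mean a smaller memory footprint and cheaper arithmetic, but the coarser grid raises the reconstruction error, which is the accuracy side of the tradeoff.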

Within an enterprise setting, a data science team at a financial services firm might use knowledge distillation to train a compact, low-latency fraud detection model from a larger, more complex teacher model, enabling real-time transaction analysis without prohibitive hardware costs.
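One common formulation of knowledge distillation blends a soft-target term, which pulls the student's temperature-softened outputs toward the teacher's, with an ordinary cross-entropy term on the true label. The sketch below shows that loss in plain Python; the logits, temperature, and weighting are hypothetical values chosen for illustration, not parameters from the report.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Numerically stable softmax; a higher temperature gives softer probabilities."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p: List[float], q: List[float]) -> float:
    """KL(p || q) for two discrete distributions over the same classes."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def distillation_loss(student_logits: List[float], teacher_logits: List[float],
                      label: int, temperature: float = 2.0, alpha: float = 0.5) -> float:
    """Weighted sum of a soft-target term (teacher) and a hard-label term."""
    soft_teacher = softmax(teacher_logits, temperature)
    soft_student = softmax(student_logits, temperature)
    # The T^2 factor keeps the soft term's gradient scale comparable across temperatures.
    soft_term = temperature ** 2 * kl_divergence(soft_teacher, soft_student)
    hard_term = -math.log(softmax(student_logits)[label])
    return alpha * soft_term + (1.0 - alpha) * hard_term


# Hypothetical logits for a three-class problem; class 0 is the true label.
teacher = [4.0, 1.0, 0.2]
close_student = [3.5, 1.2, 0.1]
far_student = [0.1, 3.0, 1.0]

print("student close to teacher:", round(distillation_loss(close_student, teacher, 0), 4))
print("student far from teacher:", round(distillation_loss(far_student, teacher, 0), 4))
```

A student whose outputs track the teacher's incurs a lower loss, which is the signal that lets a smaller model inherit behavior from a larger one during training.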

This segment's activity, which accounts for a significant portion of the market, underscores the shift from model training to efficient, scalable inference.

The model optimization tools segment was valued at USD 582.4 million in 2024 and is expected to show a gradual increase during the forecast period.

AI Inference Optimization Software Market by Region: North America Leads with 39.6% Growth Share

North America leads the geographic landscape and is estimated to contribute 39.6% of the global market's growth during the forecast period, driven by its dense concentration of hyperscale data centers and AI research hubs.
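For a sense of scale, the regional share can be applied to the forecast-period growth figure given in the report's market scope (USD 3,721.2 million). The short calculation below is illustrative arithmetic only; the resulting dollar amounts are derived values, not figures quoted in the report, and the variable names are assumptions.

```python
# Figures quoted in the report; names are illustrative.
INCREMENTAL_GROWTH_MUSD = 3721.2  # market growth 2026-2030, USD million
NORTH_AMERICA_SHARE = 0.396       # North America's share of incremental growth

north_america = INCREMENTAL_GROWTH_MUSD * NORTH_AMERICA_SHARE
other_regions = INCREMENTAL_GROWTH_MUSD - north_america

print(f"North America:     USD {north_america:,.1f} million")
print(f"All other regions: USD {other_regions:,.1f} million")
```

On these numbers, North America's share corresponds to roughly USD 1.47 billion of the incremental growth.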

In this region, optimizing transformer models for applications in finance and healthcare is a primary focus. Europe follows, with an emphasis on industrial applications and adherence to the EU AI Act, promoting investment in on-premise AI inference solutions.

Meanwhile, APAC is the fastest-growing region, with significant demand for on-device machine learning and mobile AI performance to serve its vast consumer base.

In a typical use case, an automotive manufacturer in Germany deploys optimized models for AI in autonomous systems, leveraging federated learning optimization to improve performance without centralizing sensitive driving data, ensuring compliance and enhancing vehicle safety.

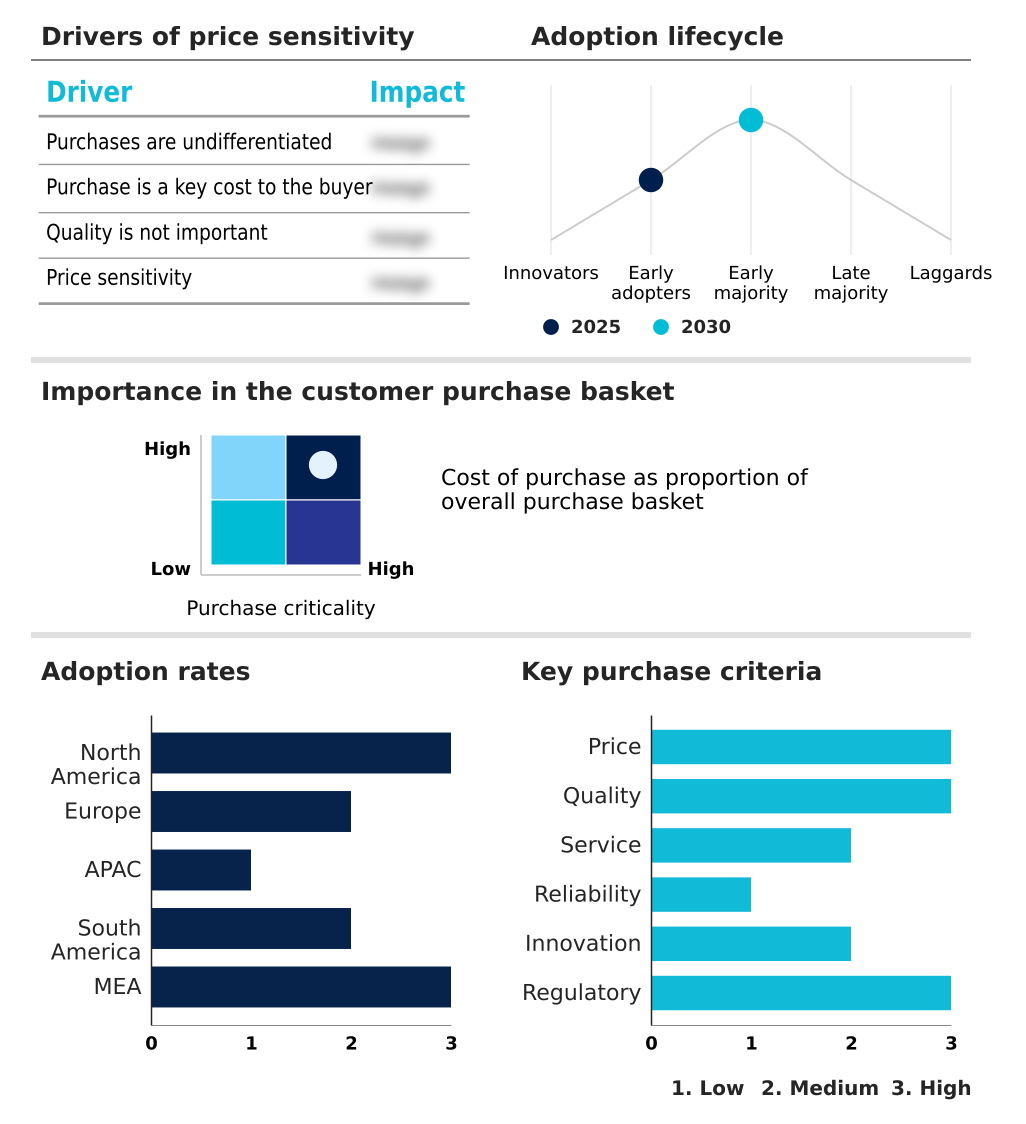

Customer Landscape Analysis for the AI Inference Optimization Software Market

The AI inference optimization software market forecasting report covers the market's adoption lifecycle, from the innovator stage to the laggard stage. It focuses on adoption rates in different regions based on penetration. The report also includes key purchase criteria and drivers of price sensitivity to help companies evaluate and develop their market growth strategies.

Competitive Landscape of the AI Inference Optimization Software Market

Companies are implementing various strategies, such as strategic alliances, partnerships, mergers and acquisitions, geographical expansion, and product/service launches, to enhance their presence in the AI inference optimization software market.

Advanced Micro Devices Inc. - Offerings include integrated software stacks and AI development toolkits for model optimization, quantization, and deployment targeting proprietary GPU and AI processor hardware for enhanced performance.

The industry research and growth report includes detailed analyses of the competitive landscape of the market and information about key companies, including:

- Advanced Micro Devices Inc.

- Amazon.com Inc.

- Blaize

- Cerebras Systems Inc.

- Edgeimpulse Inc.

- Google LLC

- Graphcore Ltd.

- Groq Inc.

- Hailo Technologies Ltd.

- Hugging Face Inc.

- IBM Corp.

- Intel Corp.

- Latent AI Inc.

- Microsoft Corp.

- Modular Inc.

- NVIDIA Corp.

- Qualcomm Inc.

- Red Hat Inc.

- SambaNova Systems Inc.

- Syntiant Corp.

Qualitative and quantitative analysis of companies has been conducted to help clients understand the wider business environment as well as the strengths and weaknesses of key industry players. Data is qualitatively analyzed to categorize companies as pure play, category-focused, industry-focused, and diversified; it is quantitatively analyzed to categorize companies as dominant, leading, strong, tentative, and weak.

Recent Developments in the AI Inference Optimization Software Market

- In March 2025, Qualcomm Inc. launched the AI Hub, a library of over one hundred pre-optimized models, to streamline the deployment of high-performance AI on Snapdragon mobile and industrial platforms.

- In May 2025, Modular Inc. released a significant update to its MAX platform, introducing a universal kernel library designed to optimize execution patterns across both x86 and ARM architectures for seamless cross-platform deployment.

- In September 2025, Microsoft Corp. enhanced its ONNX Runtime with an advanced kernel optimization update, enabling the execution of large language models on standard servers through a novel 4-bit quantization strategy.

- In October 2025, Hailo Technologies Ltd. introduced a specialized compression toolkit for the automotive sector in APAC, allowing complex vision transformers to be deployed on local hardware for advanced driver-assistance systems.

Research Analyst Overview: AI Inference Optimization Software Market

Boardroom-level investment decisions are shifting from raw hardware acquisition to sophisticated software strategies that maximize the efficiency of existing compute infrastructure. The adoption of model quantization and knowledge distillation is no longer a niche technical exercise but a core component of managing operational expenditures.

For instance, a telecommunications firm can use neural network pruning to deploy complex network monitoring models on existing server hardware, delaying a multi-million dollar capital expenditure cycle. This move toward software-defined hardware is supported by interoperability standards like the Open Neural Network Exchange (ONNX), which enables cross-platform ML deployment.
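As an illustration of the pruning idea, the sketch below applies unstructured magnitude pruning to a small weight vector: the smallest-magnitude weights are set to zero until a target sparsity is reached. The weights, the 50% sparsity target, and the function name are hypothetical; real toolchains usually prune iteratively with fine-tuning, and the speedup depends on whether the runtime and hardware can exploit sparsity.

```python
from typing import List


def magnitude_prune(weights: List[float], sparsity: float) -> List[float]:
    """Zero out the smallest-magnitude fraction of weights (unstructured pruning)."""
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


# Hypothetical layer weights, chosen only for illustration.
weights = [0.9, -0.02, 0.4, 0.01, -0.75, 0.03, 0.2, -0.05]
pruned = magnitude_prune(weights, sparsity=0.5)

kept = sum(1 for w in pruned if w != 0.0)
print("pruned weights:", pruned)
print(f"non-zero weights kept: {kept} of {len(weights)}")
```

Half of the weights are removed while the large-magnitude ones, which tend to matter most for the output, are preserved.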

Key technologies such as automatic kernel generation and sparse model acceleration are enabling this shift. The ability to perform runtime acceleration and GPU inference optimization is now a critical vendor selection criterion.

This focus on computational efficiency in AI is essential for enabling real-time AI processing, particularly for low-latency LLM serving and on-device machine learning applications, ultimately shaping the entire AI model deployment lifecycle.

Technavio's research methodology blends expert interviews, extensive data synthesis, and validated models to produce its AI Inference Optimization Software Market insights.

| Market Scope | |
|---|---|
| Page number | 299 |
| Base year | 2025 |
| Historic period | 2020-2024 |
| Forecast period | 2026-2030 |
| Growth momentum & CAGR | Accelerate at a CAGR of 22.1% |
| Market growth 2026-2030 | USD 3,721.2 million |
| Market structure | Fragmented |
| YoY growth 2025-2026 | 20.3% |
| Key countries | US, Canada, Mexico, Germany, UK, France, Italy, The Netherlands, Spain, China, Japan, India, South Korea, Australia, Indonesia, Brazil, Argentina, Colombia, Saudi Arabia, UAE, South Africa, Israel and Turkey |
| Competitive landscape | Leading Companies, Market Positioning of Companies, Competitive Strategies, and Industry Risks |

AI Inference Optimization Software Market: Key Questions Answered in This Report

What is the expected growth of the AI Inference Optimization Software Market between 2026 and 2030?

The AI Inference Optimization Software Market is expected to grow by USD 3.72 billion during 2026-2030, registering a CAGR of 22.1%. Year-over-year growth in 2026 is estimated at 20.3%. This acceleration is shaped by escalating demand for real-time processing at the network edge, which is intensifying demand across multiple end-use verticals covered in the report.

What segmentation does the market report cover?

The report is segmented by Component (Model optimization tools, Inference runtimes and engines, Platforms and frameworks, and Services), Deployment (Cloud based and On premises), Business Segment (Large enterprises and Small and medium enterprises), and Geography (North America, Europe, APAC, South America, and Middle East and Africa). Among these, the Model optimization tools segment is estimated to witness significant growth during the forecast period, driven by rising adoption across key application areas. Each segment includes detailed qualitative and quantitative analysis, along with historical data from 2020-2024 and forecasts through 2030 with year-over-year growth rates.

Which regions are analyzed in the report?

The report covers North America, Europe, APAC, South America, and Middle East and Africa. North America is estimated to contribute 39.6% to market growth during the forecast period. Country-level analysis includes US, Canada, Mexico, Germany, UK, France, Italy, The Netherlands, Spain, China, Japan, India, South Korea, Australia, Indonesia, Brazil, Argentina, Colombia, Saudi Arabia, UAE, South Africa, Israel and Turkey, with dedicated market size tables and year-over-year growth for each.

What are the key growth drivers and market challenges?

The primary driver is escalating demand for real-time processing at the network edge, which is accelerating investment and industry demand. The main challenge is the persistence of hardware heterogeneity and interoperability barriers, which creates operational barriers for key market participants. The report quantifies the impact of each driver and challenge across 2026 and 2030 with comparative analysis.

Who are the major players in the AI Inference Optimization Software Market?

Key vendors include Advanced Micro Devices Inc., Amazon.com Inc., Blaize, Cerebras Systems Inc., Edgeimpulse Inc., Google LLC, Graphcore Ltd., Groq Inc., Hailo Technologies Ltd., Hugging Face Inc., IBM Corp., Intel Corp., Latent AI Inc., Microsoft Corp., Modular Inc., NVIDIA Corp., Qualcomm Inc., Red Hat Inc., SambaNova Systems Inc. and Syntiant Corp. The report provides qualitative and quantitative analysis categorizing companies as dominant, leading, strong, tentative, and weak based on their market positioning. Company profiles include business segment analysis, SWOT assessment, key offerings, and recent strategic developments.

AI Inference Optimization Software Market Research Insights

Market dynamics are shaped by the need to balance computational power with operational expenditure, driving adoption of specialized optimization tools. The rapid expansion of the APAC market, which is projected to grow at a CAGR of 23.1%, is compelling enterprises to find methods for reducing the carbon footprint of AI in line with corporate ESG mandates.

In practice, a large e-commerce provider uses inference optimization software to refine its recommendation engines, enabling efficient model optimization for the Arm CPUs prevalent in its server farms. This focus on sustainable AI computing helps manage the high costs associated with scaling services.

The technical hurdles in achieving this balance, particularly the latency versus accuracy tradeoff, remain a central focus for software vendors developing the next generation of AI chipset software stacks and supporting frameworks.
