AI Inference Hardware Market Size 2026-2030

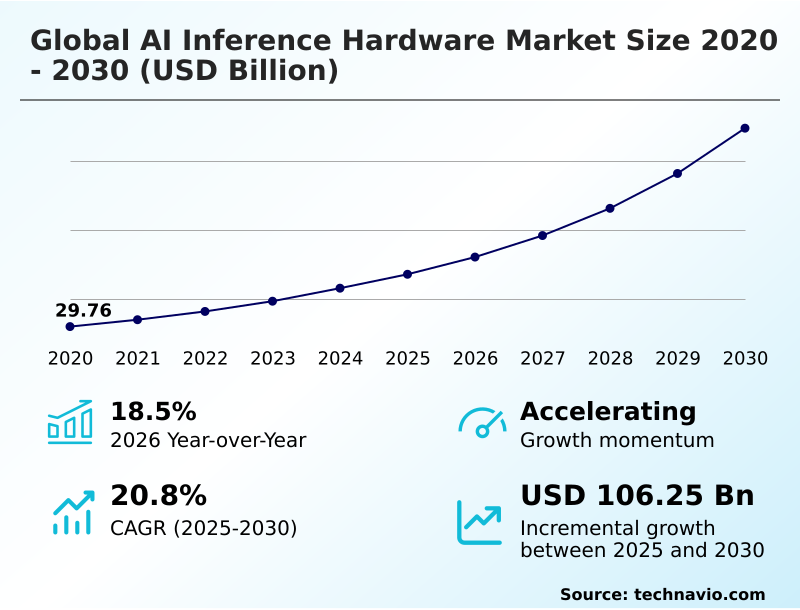

The AI inference hardware market is forecast to grow by USD 106.25 billion, at a CAGR of 20.8% from 2025 to 2030. Escalating demand for edge computing capabilities across industries is expected to drive the market.

Major Market Trends & Insights

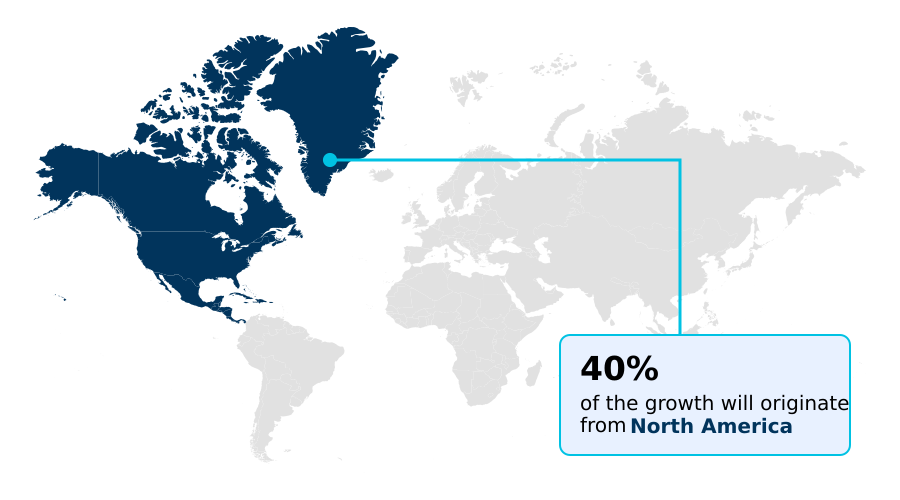

- North America dominates the market and is estimated to account for 39.8% of market growth during the forecast period.

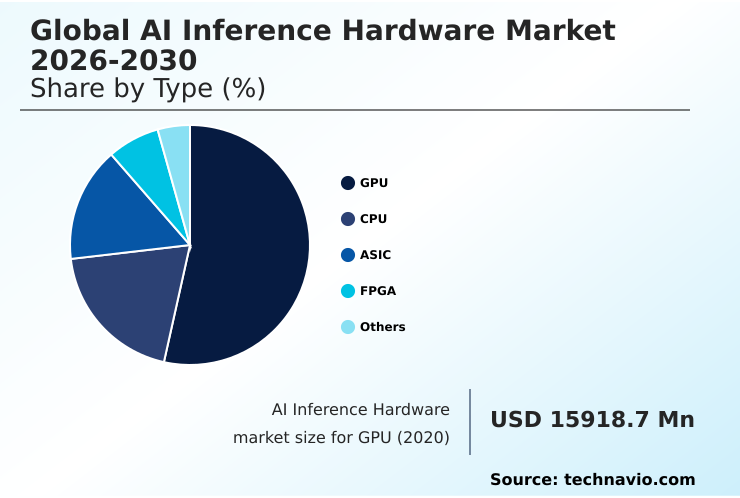

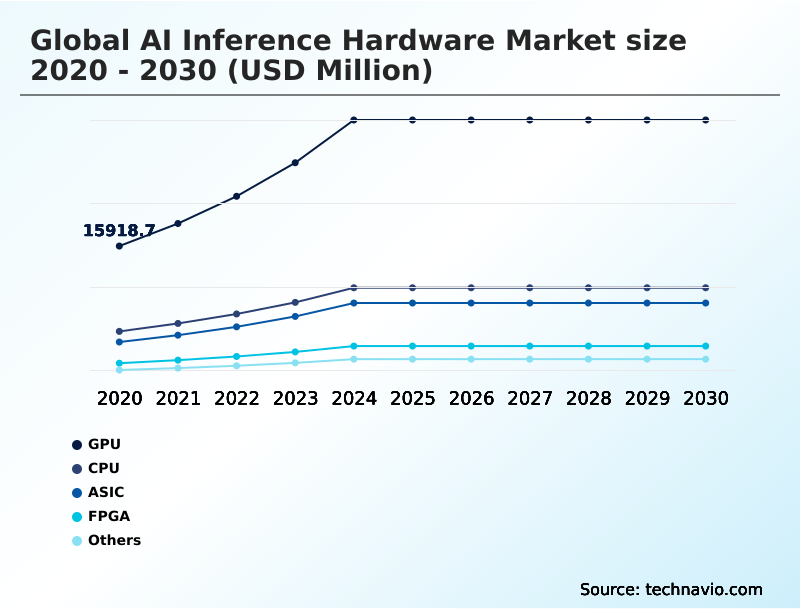

- By Type - GPU segment was valued at USD 30.79 billion in 2024

- By Deployment - Cloud inference segment accounted for the largest market revenue share in 2024

Market Size & Forecast

- Market Opportunities: USD 144.29 billion

- Market Future Opportunities: USD 106.25 billion

- CAGR from 2025 to 2030: 20.8%
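As a rough consistency check on the figures above, the headline increase and the CAGR can be related through the standard compound-growth formula. The sketch below assumes five compounding periods (base year 2025 through 2030) and a constant annual rate. Neither the implied base value nor the yearly path is published in this summary, and the function names are illustrative only.

```python
# Relate an absolute market increase to a CAGR under constant compounding.
# Assumptions (not stated in the report summary): 5 compounding periods
# from the 2025 base year to 2030, and the same growth rate every year.

def implied_base_value(increment: float, cagr: float, years: int) -> float:
    """Base-year value implied by an absolute increase over `years` periods."""
    growth_factor = (1.0 + cagr) ** years
    return increment / (growth_factor - 1.0)


def project(base: float, cagr: float, years: int) -> list[float]:
    """Year-by-year values, starting with the base year itself."""
    return [base * (1.0 + cagr) ** t for t in range(years + 1)]


increment_usd_bn = 106.25   # forecast increase, from the report
cagr = 0.208                # 20.8%, from the report
years = 5                   # assumed: 2025 -> 2030

base_usd_bn = implied_base_value(increment_usd_bn, cagr, years)
path_usd_bn = project(base_usd_bn, cagr, years)

for offset, value in enumerate(path_usd_bn):
    print(f"{2025 + offset}: USD {value:.2f} billion")
```

Because the report lists a first-year growth rate (18.5% for 2025-2026) that is lower than the CAGR, the actual yearly values will differ from this constant-rate path; the sketch only shows how the two headline numbers fit together.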

Market Summary

- The AI inference hardware market is defined by the proliferation of specialized physical components designed to execute pre-trained machine learning models efficiently. Unlike training systems, this hardware focuses on real-time decision-making, optimizing for low-latency inference and high-throughput processing. Key drivers include the escalating demand for localized data processing in edge computing environments and the computational requirements of generative AI workloads.

- For instance, in supply chain management, on-premises AI hardware is used for predictive maintenance, analyzing sensor data to forecast equipment failures and reduce downtime. A primary trend is the shift from general-purpose chips to application-specific integrated circuits (ASICs) and other custom silicon designs, which deliver superior performance per watt.

- This move toward energy-efficient data processing addresses both cost and sustainability concerns. However, the high initial capital investment and the rapid evolution of AI algorithms, which can render specialized hardware obsolete, pose significant challenges to widespread adoption.

- The market's dynamism is further shaped by innovations like chiplet-based architectures and silicon photonics, aimed at overcoming the memory wall and data transfer bottlenecks in next-generation AI inference servers.

How is the AI Inference Hardware Market Segmented?

The AI inference hardware industry research report provides comprehensive data (region-wise segment analysis), with forecasts and estimates in USD million for the period 2026-2030, as well as historical data from 2020-2024, for the following segments.

- Type
    - GPU
    - CPU
    - ASIC
    - FPGA
    - Others
- Deployment
    - Cloud inference
    - Edge inference
    - On-premises
- Application
    - Computer vision
    - Robotics
    - Generative AI
    - NLP
    - Others
- Geography
    - North America
        - US
        - Canada
        - Mexico
    - Europe
        - Germany
        - UK
        - France
    - APAC
        - China
        - Japan
        - India
    - Middle East and Africa
        - UAE
        - Saudi Arabia
        - Israel
    - South America
        - Brazil
        - Argentina
    - Rest of World (ROW)

By Type Insights

The GPU segment is estimated to witness significant growth during the forecast period.

Graphics processing units (GPUs) remain foundational accelerated computing platforms for AI hardware for data centers, excelling at inference workload optimization.

Their parallel structure is ideal for matrix multiplication acceleration, crucial for both convolutional neural networks (CNNs) and advanced transformer model acceleration. Modern designs incorporate high-bandwidth memory (HBM) to feed processing cores, maximizing throughput for real-time data processing hardware.

To suit dense server environments, manufacturers offer specialized variants that adhere to strict thermal design power (TDP) limits.

The software ecosystem enables rapid deployment of scalable compute solutions for tasks like predictive analytics, with some system-on-chip (SoC) architectures achieving a 25% improvement in data pipeline efficiency.

The GPU segment was valued at USD 30.79 billion in 2024 and is projected to increase gradually during the forecast period.

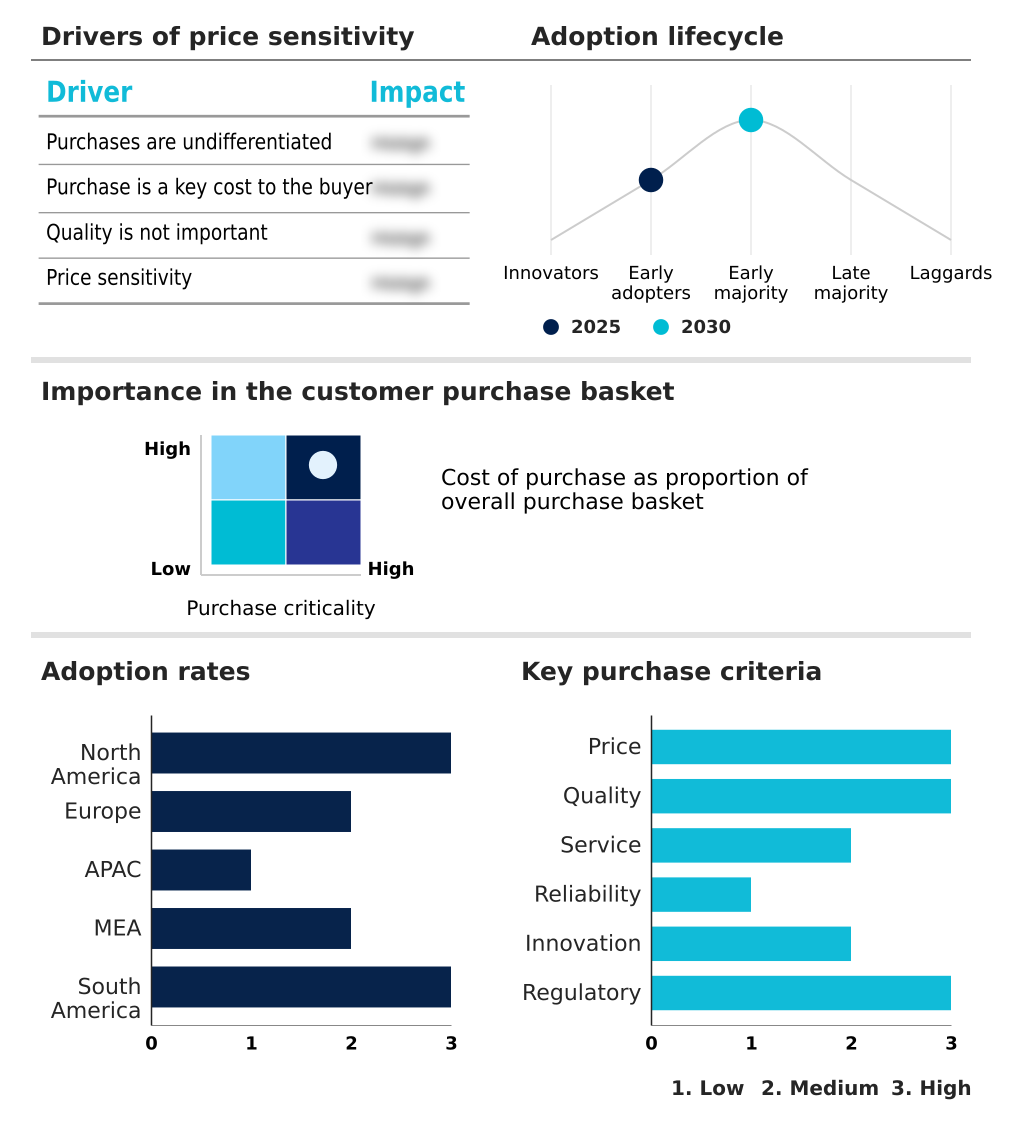

Regional Analysis

North America is estimated to contribute 39.8% to the growth of the global market during the forecast period. Technavio’s analysts have elaborately explained the regional trends and drivers that shape the market during the forecast period.

North America leads in deploying AI inference servers for cloud inference optimization, capturing nearly 40% of the market's incremental growth, driven by hyperscale data centers. These facilities prioritize high-throughput processing using specialized inference accelerators.

In APAC, the fastest-growing region with expansion rates exceeding 21%, the focus is on manufacturing and deploying AI hardware for smart cities and autonomous systems.

European adoption centers on industrial applications and on-premises AI hardware to meet data sovereignty rules, demanding deterministic latency hardware.

The development of neural processing units (NPUs) and hardware for vision transformer architectures is global, with systolic array processing techniques being key to performance gains in AI hardware for computer vision across scalable compute solutions.

Market Dynamics

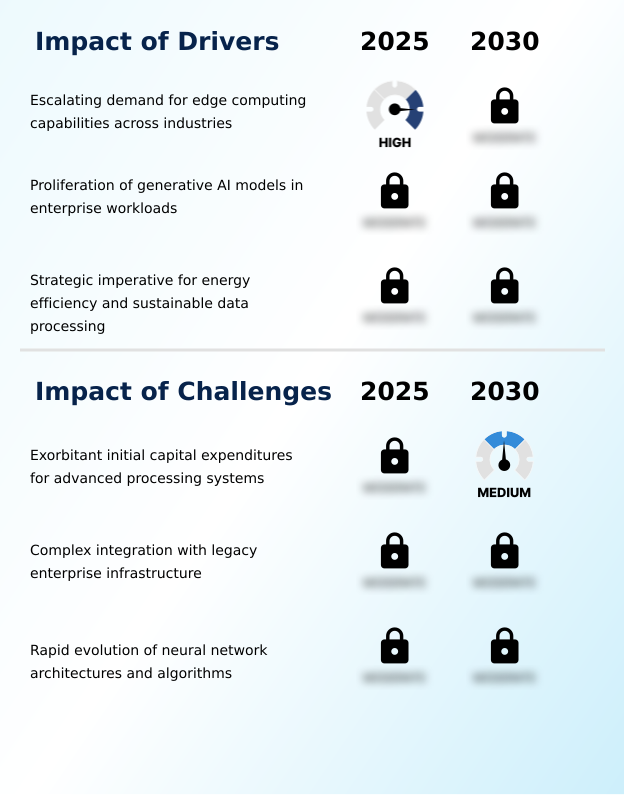

Our researchers analyzed the data with 2025 as the base year, along with the key drivers, trends, and challenges. A holistic analysis of drivers will help companies refine their marketing strategies to gain a competitive advantage.

- A critical consideration for enterprises is the total cost of AI inference hardware deployment, which extends beyond the initial purchase to include operational expenses. Integrating AI hardware with legacy systems often presents significant hurdles, complicating the path to modernization.

- The constant impact of AI algorithm evolution on hardware creates a risk of rapid obsolescence, making the performance comparison of GPU vs ASIC for inference a crucial decision point for long-term ROI.

- For many, energy efficiency in AI data centers is paramount, driving the adoption of solutions that promise lower power consumption in AI inference servers and effective thermal management in AI hardware design. The demand for low-latency hardware for edge computing is surging, especially for AI inference hardware for autonomous driving and real-time computer vision.

- In this context, chiplet architecture benefits for AI accelerators and the use of silicon photonics for AI bandwidth bottlenecks are key future trends in AI accelerator technology. The debate between on-premises vs cloud AI inference hardware continues, with each model offering distinct advantages for scalability and security.

- Ultimately, the hardware requirements for large language models and generative AI content creation are pushing the boundaries of current technology, forcing a reevaluation of how to build scalable AI hardware for enterprise use while optimizing hardware for transformer models. This dynamic market saw year-over-year growth exceeding 18%, underscoring the relentless pace of adoption.

What are the key market drivers leading to the rise in adoption of AI inference hardware?

- The escalating demand for edge computing capabilities across industries, which require immediate and localized data analysis, is a primary driver propelling the market forward.

- The demand for energy-efficient data processing is a primary market driver, pushing for sustainable computing architectures with superior performance per watt. This is critical for both large-scale deep learning inference and localized data processing in edge computing environments.

- The need for low-latency inference in applications like AI for industrial automation is fueling investment in edge AI acceleration and decentralized computational power.

- Concurrently, the explosion of generative AI workloads and the specific generative AI hardware requirements for large language model (LLM) inference are creating immense demand for specialized accelerators.

- This trend has renewed interest in designs based on neuromorphic computing principles, which promise to lower energy consumption for complex tasks by over 60%.

What are the market trends shaping the AI Inference Hardware Industry?

- A key trend shaping the market is the accelerated shift toward application-specific integrated circuits for inference workloads. This move is driven by the need for enhanced operational efficiency and performance optimization in large-scale deployments.

- The market is undergoing a significant transition toward custom silicon design, with application-specific integrated circuits (ASICs) offering superior performance. Organizations are adopting chiplet-based architectures and advanced packaging technologies, which improve manufacturing yields by up to 15% by combining components from different semiconductor manufacturing nodes. This heterogeneous computing integration addresses the economic challenges of monolithic designs.

- To combat data transfer bottlenecks, the industry is integrating silicon photonics and co-packaged optics, enabling disaggregated data center resources. This optical interconnect technology is a key memory wall solution. Meanwhile, field-programmable gate arrays (FPGAs), configurable via hardware description languages, provide an adaptable alternative, allowing hardware reconfiguration that reduces redesign cycles by 40%.

What challenges does the AI Inference Hardware Industry face during its growth?

- The exorbitant initial capital expenditure required for acquiring and deploying advanced processing systems remains a key challenge affecting widespread market adoption.

- Integrating modern hardware with legacy systems, often reliant on central processing units (CPUs), poses a significant challenge, sometimes increasing deployment costs by 45%. The rapid evolution of algorithms creates a risk of obsolescence for specialized hardware like tensor processing units (TPUs) and language processing units (LPUs).

- Even with advancements like high-level synthesis tools, programming unique architectures such as a reconfigurable dataflow unit (RDU) or a wafer-scale engine requires niche expertise. For developers of embedded AI systems, including robotics compute modules, deploying RISC-V based AI accelerators for low-power AI vision applications can be complex.

- This complexity can delay product launches for in-cabin monitoring systems and predictive maintenance hardware by up to six months.

Exclusive Technavio Analysis on Customer Landscape

The AI inference hardware market forecasting report includes the adoption lifecycle of the market, covering the innovator’s stage through the laggard’s stage. It focuses on adoption rates in different regions based on penetration. Furthermore, the report also includes key purchase criteria and drivers of price sensitivity to help companies evaluate and develop their market growth strategies.

Competitive Landscape

Companies are implementing various strategies, such as strategic alliances, partnerships, mergers and acquisitions, geographical expansion, and product/service launches, to enhance their presence in the industry.

Advanced Micro Devices Inc. - Core offerings include custom silicon and specialized accelerators engineered for high-throughput, energy-efficient processing of complex AI inference workloads in cloud, edge, and on-device environments.

The industry research and growth report includes detailed analyses of the competitive landscape of the market and information about key companies, including:

- Advanced Micro Devices Inc.

- Amazon.com Inc.

- Apple Inc.

- Arm Ltd.

- Cerebras

- Google LLC

- Groq Inc.

- Hailo Technologies Ltd.

- IBM Corp.

- Intel Corp.

- Kneron Inc.

- MediaTek Inc.

- NVIDIA Corp.

- NXP Semiconductors NV

- Qualcomm Inc.

- SambaNova Systems Inc.

- SiMa Technologies Inc.

- STMicroelectronics NV

- Syntiant Corp.

- Tenstorrent Inc.

Qualitative and quantitative analysis of companies has been conducted to help clients understand the wider business environment as well as the strengths and weaknesses of key industry players. Data is qualitatively analyzed to categorize companies as pure play, category-focused, industry-focused, and diversified; it is quantitatively analyzed to categorize companies as dominant, leading, strong, tentative, and weak.

Recent Developments and News in the AI Inference Hardware Market

- In November 2024, Qualcomm Technologies, Inc. announced its next-generation AI inference-optimized solutions for data centers, including its new accelerator cards and rack-scale systems designed for cloud workloads.

- In September 2024, Dell introduced a new high-density server, positioning it as an AI infrastructure solution for enterprise and cloud deployments.

- In January 2025, NVIDIA and Intel Corporation revealed a strategic collaboration to co-develop custom data center and PC products, aiming to accelerate computing workloads across various markets.

- In April 2025, AMD and OpenAI announced a strategic partnership to scale AI infrastructure capacity, involving the deployment of AMD GPU-based compute systems for large-scale AI inference and training.

Technavio’s research methodology blends expert interviews, extensive data synthesis, and validated models to produce its AI inference hardware market insights.

| Market Scope | |
|---|---|
| Page number | 314 |
| Base year | 2025 |
| Historic period | 2020-2024 |
| Forecast period | 2026-2030 |
| Growth momentum & CAGR | Accelerate at a CAGR of 20.8% |
| Market growth 2026-2030 | USD 106,251.6 million |
| Market structure | Fragmented |
| YoY growth 2025-2026 (%) | 18.5% |
| Key countries | US, Canada, Mexico, Germany, UK, France, The Netherlands, Sweden, Spain, China, Japan, India, South Korea, Taiwan, Indonesia, UAE, Saudi Arabia, Israel, South Africa, Qatar, Brazil, Argentina and Chile |
| Competitive landscape | Leading Companies, Market Positioning of Companies, Competitive Strategies, and Industry Risks |

Research Analyst Overview

- The AI inference hardware landscape is rapidly evolving beyond general-purpose central processing units (CPUs) and graphics processing units (GPUs). Boardroom decisions on technology investment now center on the strategic adoption of specialized hardware, including application-specific integrated circuits (ASICs) and tensor processing units (TPUs). The goal is to achieve superior performance per watt and low-latency inference for deep learning inference workloads.

- Innovations such as chiplet-based architectures and advanced packaging technologies are becoming standard, while silicon photonics and co-packaged optics address critical data bottlenecks. Hardware for transformer model acceleration is crucial, with some specialized chips delivering a 40% reduction in processing time.

- For edge AI acceleration, field-programmable gate arrays (FPGAs) and neural processing units (NPUs) on system-on-chip (SoC) architectures offer reconfigurable solutions, even as the industry explores designs like the wafer-scale engine and reconfigurable dataflow unit (RDU).

What are the Key Data Covered in this AI Inference Hardware Market Research and Growth Report?

- What is the expected growth of the AI Inference Hardware Market between 2026 and 2030?
    - USD 106.25 billion, at a CAGR of 20.8%
- What segmentation does the market report cover?
    - The report is segmented by Type (GPU, CPU, ASIC, FPGA, and Others), Deployment (Cloud inference, Edge inference, and On-premises), Application (Computer vision, Robotics, Generative AI, NLP, and Others) and Geography (North America, Europe, APAC, Middle East and Africa, South America)
- Which regions are analyzed in the report?
    - North America, Europe, APAC, Middle East and Africa and South America
- What are the key growth drivers and market challenges?
    - Escalating demand for edge computing capabilities across industries, Exorbitant initial capital expenditures for advanced processing systems
- Who are the major players in the AI Inference Hardware Market?
    - Advanced Micro Devices Inc., Amazon.com Inc., Apple Inc., Arm Ltd., Cerebras, Google LLC, Groq Inc., Hailo Technologies Ltd., IBM Corp., Intel Corp., Kneron Inc., MediaTek Inc., NVIDIA Corp., NXP Semiconductors NV, Qualcomm Inc., SambaNova Systems Inc., SiMa Technologies Inc., STMicroelectronics NV, Syntiant Corp. and Tenstorrent Inc.

Market Research Insights

- The market's dynamics are shaped by a strategic shift toward decentralized computational power, with adoption in edge computing environments reducing data processing latency by up to 70%. This move to localized data processing is critical for real-time applications like AI hardware for autonomous systems and in-cabin monitoring systems.

- The focus on sustainable computing architectures has led to specialized inference accelerators that improve energy-efficient data processing, with some custom silicon designs achieving a 40% better performance-per-watt ratio compared to legacy hardware. As enterprises scale AI for industrial automation and predictive analytics, optimizing inference workloads is paramount.

- This has resulted in a clear preference for hardware that supports deterministic latency, a non-negotiable for robotics compute modules, where a 25% reduction in response time can prevent operational failures.
